Optimizing Quickbase Admin Console Connected (Sync) Tables for Effective Governance

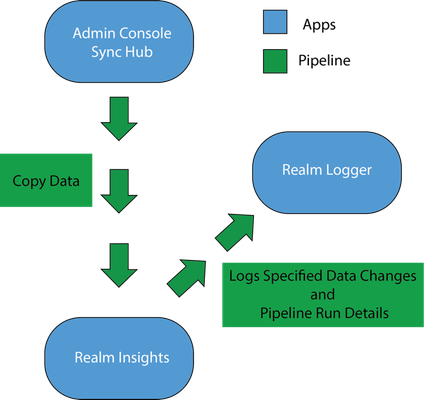

Approximately 5-minute read

In the ever-evolving landscape of data management, Quickbase's Admin Console Connected (ACC) tables (also known as Sync tables) stand out as a pivotal tool for administrators. These tables are more than just a feature - they represent a methodology for streamlined data governance and management. This blog post delves into the strategic setup and utilization of ACC tables, guiding you through efficient administration and governance of your Quickbase realm.

The Governance Starter Kit

To establish a comprehensive governance framework, realm admins need to create three core applications. These three apps lay a solid foundation that allows for easy expansion and subsequent modifications.

Step 1 - Create an app called Admin Console Sync Hub: This is the centralized location for all ACC tables and is crucial for warehousing the data points that the realm admin will use in the Realm Insights app. Less is more in this app. Adding relationships, reports, roles, users, etc. is not advised. This is essentially a data repository that updates according to the app manager's specifications. ACC tables currently include Users, User Access, and Applications. It is important to create this foundation to prepare for future tables that will be added.

Step 2 - Create an app called Realm Insights: This is where the Realm Admin will slice and dice all realm data to get a holistic understanding of the realm and its overall health. It shows how Apps and Users are being utilized and what they interact with in the Realm. It will also help develop, enforce, and maintain policy with Quickbase and ensure alignment with your company IT (Information Technology) strategy.

Step 3 - Create an app called Realm Logger: An advanced audit log that tracks specific attributes with a defined granularity. Some examples of data tracked would be App Deletions, User Token additions, User Token changes, User Access additions/removals, App Manager changes, etc.
Through the utilization of Pipelines, this app creates logs from changes in data in the Admin Console Sync Hub app and the Realm Insights app. Although some of these attributes may be tracked in Audit Logs, this methodology allows flexibility and granularity based on the needs of the client’s organization.

Admin Console Sync Hub Overview

It is important to note that each ACC table should come with a Pipeline. The Pipeline has multiple purposes. It copies data from the Admin Console Sync Hub into Realm Insights. It also logs its results in Realm Logger, along with the changes/additions/removals of the data that was interacted with.

ACC Table Pipeline Process to log a Deleted App

1. The ACC Table of Applications updates once a day.
2. When the table updates (or at a scheduled time), the Apps Pipeline fires.
3. It updates the Realm Insights Applications Table with the updated data.
4. The Pipeline then compares the updated data and removes all records (apps) that are no longer in the Apps Table of the Admin Console Sync Hub.
5. These records are then added to the Realm Logger Apps Deleted Table.

Now the Realm Admin can see all apps deleted in the Realm and the attributes they choose to maintain.

Separation for Simplification

A common question is why this solution keeps the Admin Console Sync Hub and its ACC tables separate from Realm Insights. While integrating directly into Realm Insights is possible (and often necessary for certain API integrations), maintaining separate entities simplifies app management. This separation prevents the need for re-architecting Realm Insights with each new ACC table addition (read about our expansion plans below), enabling a more streamlined update process through pipelines. This method has proven easier to scale and allows the client to build the solution as they see fit. There is a wide range of building skillsets, and technically, you may not want a pipeline per ACC Table. You may want to use one pipeline or twenty.
The point is to prepare for scale and keep applications limited in roles and scope so that a “Franken-App” does not occur.

User Token Added as ACC after a Few Months

Example: A Realm Admin imports a User Token file from Admin Console on a weekly basis into a Table labelled “User Token” in Realm Insights. In a few months, the Realm Admin has been told a new User Token ACC Table is going to be made available. There are many reports, workflows, and relationships associated with the User Token table in Realm Insights. Once the User Token ACC Table is made available, the Realm Admin can create a pipeline to copy the data into the Realm Insights app.

NOTE: All Quickbase plans include audit logging, which is accessed from the Admin Console. If you decide to set up your own audit table in the Realm Logger app, this can be a great tool to facilitate custom reporting and notifications. However, any audit records saved to a Quickbase app can be modified or deleted if the roles in the app allow this. That means the Realm Logger may not meet your needs if you plan to use it for compliance purposes. To determine the right approach in that case, please make sure you consult your legal team first.
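The deleted-app logging process described earlier (under "ACC Table Pipeline Process to log a Deleted App") boils down to a set comparison between the two Applications tables. The following is a minimal Python sketch of that comparison only, not the Pipeline itself; the record shape and the field names `app_id`, `app_name`, and `logged_on` are hypothetical and chosen purely for illustration.

```python
from datetime import date


def find_deleted_apps(insights_apps, sync_hub_apps):
    """Return Realm Insights records whose app ID no longer appears in the Sync Hub."""
    current_ids = {app["app_id"] for app in sync_hub_apps}
    return [app for app in insights_apps if app["app_id"] not in current_ids]


def build_log_entries(deleted_apps, logged_on):
    """Shape the removed records as rows for a hypothetical 'Apps Deleted' log table."""
    return [
        {
            "app_id": app["app_id"],
            "app_name": app["app_name"],
            "logged_on": logged_on.isoformat(),
        }
        for app in deleted_apps
    ]


# Hypothetical snapshots: the Sync Hub reflects today's refresh,
# Realm Insights still holds yesterday's copy.
sync_hub_apps = [{"app_id": "a1", "app_name": "CRM"}]
insights_apps = [
    {"app_id": "a1", "app_name": "CRM"},
    {"app_id": "b2", "app_name": "Old Tracker"},
]

deleted = find_deleted_apps(insights_apps, sync_hub_apps)
log_entries = build_log_entries(deleted, date(2024, 1, 15))
print(log_entries)
```

Keeping the comparison keyed on a stable identifier rather than the app name avoids logging a rename as a deletion.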
Use Cases

Here are some examples of the data insights you can gain by leveraging just the three ACC tables that exist now:

Users – Users in the Realm
- How many non-company emails are being used in the realm
- Anytime a user changes permissions: Can Create Apps, Realm Approved, Realm Admin, Super User, Pipeline create permissions, App Admin, Can Create User Tokens
- Non-Realm Approved Employees
- Users to Deny (based on length of time not accessed)
- Users that have Never Accessed an app, but still have Access

Access – Users and their app access
- # of App Access per User
- App Level Permissions per User per App
- # of Apps Accessed per specific timeframe

Apps – Apps in the Realm
- How many applications have not been accessed in 90/180/240/360 days
- # of Apps per App Manager
- Apps Created per Year
- Everyone on the Internet Apps
- Apps with or without Vendor Access

Anticipating Future Expansions

Quickbase's commitment to growth is evident with the planned introduction of new Admin Console connected tables, encompassing User Tokens, Pipelines, Groups, Tables, Pipeline Access, and Solutions. These additions, expected throughout 2024, highlight the need for a robust foundation to facilitate easy scaling.

Conclusion

The ACC tables are more than just a single feature. They are a cornerstone of effective Quickbase governance. By understanding and implementing these strategic practices, you can transform the way you manage your realm, laying a foundation for growth and efficiency. As more data points become available, scaling becomes much easier as you mature with the product and the product adds additional ACC Tables and APIs. Explore more about connecting ACC tables in our help center article. For in-depth information and if you need further assistance, don’t hesitate to reach out to your sales or service support contacts.

499 Views, 2 likes, 0 Comments

Pipeline to remove file attachments

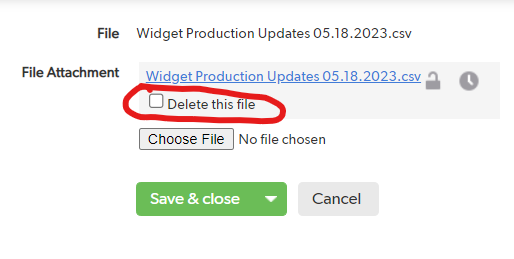

I am trying to find out whether there is a way to use a pipeline to remove file attachments.

I am automating a process to incorporate data into our system using CSV uploads. The issue is that I would like to keep some of the information from the upload available (e.g. the Requestor, Date Uploaded, File Name) but I don't want to actually archive the file on QuickBase due to space limitations. Is there a way to just delete the file itself without deleting the entire record?

You can of course click into the file and manually delete it (see below), but I want this to be automated as I won't be doing the uploads myself. The field type is "file attachment" and it appears to be stored as a URL/Link. It does not behave like other fields in the pipeline and I have not found a way to override it yet.

Thanks!

------------------------------
Kevin Gardner
------------------------------

209 Views, 0 likes, 11 Comments

(Pipelines) Get Users in Role / Process XML from HTTP API
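A note on the preceding question ("Pipeline to remove file attachments"): one possible direction is to delete the stored file version through the API rather than through a field update. The Python sketch below only builds such a request and does not send it. It assumes the Quickbase JSON API exposes a "delete file" operation at `DELETE /v1/files/{tableId}/{recordId}/{fieldId}/{versionNumber}` with `QB-Realm-Hostname` and `Authorization: QB-USER-TOKEN ...` headers; that shape should be checked against the current API reference before use. The function name and every ID, hostname, and token shown are hypothetical placeholders.

```python
import urllib.request


def build_delete_file_request(realm_hostname, user_token, table_id,
                              record_id, field_id, version_number):
    """Build (but do not send) a DELETE request for one file attachment version.

    Assumption: the endpoint path and header names follow the Quickbase JSON
    API "delete file" operation; verify them in the official documentation.
    """
    url = (
        "https://api.quickbase.com/v1/files/"
        f"{table_id}/{record_id}/{field_id}/{version_number}"
    )
    headers = {
        "QB-Realm-Hostname": realm_hostname,
        "Authorization": f"QB-USER-TOKEN {user_token}",
    }
    return urllib.request.Request(url, headers=headers, method="DELETE")


# Placeholder values only; none of these identify a real realm, table, or token.
req = build_delete_file_request(
    realm_hostname="example.quickbase.com",
    user_token="placeholder_token",
    table_id="bqxxxxxxx",
    record_id=42,
    field_id=7,
    version_number=1,
)
print(req.get_method(), req.full_url)
```

The record itself is untouched by a call of this kind, so fields such as Requestor, Date Uploaded, and File Name would need to be captured in their own fields if they are to remain available.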

Hello, any help/advice would be much appreciated.

I'm trying to send a reminder email to users in a specific Role, in a specific App, using Pipelines. As far as I can tell, there is no JSON RESTful API call that does this (Get Users only returns all users for an App, with no info on Roles). However, API_UserRoles returns each user from an app along with the Roles they have. In theory, I could somehow loop over this and send the email to only those users with a specific role.

I can successfully use the Quickbase Channel -> 'Quickbase APIs' -> 'Make Request' step to call API_UserRoles and get this data.

Here's where I run into trouble: How do I process this XML into a form that another step could use (e.g. loop over it and send emails)?

I found this question: "To capture an XML response from an API in Pipelines" but I can't seem to figure out how to get {{a.json.qdbapi.value}}. When I try to view its contents (emailed to myself) it is blank. There isn't any "Result" field or something like that from this request step available in subsequent steps. Only URL, Method, Headers, Content Type, & Body.

For instance, if I want to get the JSON out of the XML (using {{a.json.qdbapi.value}}) with the JSON Handler Channel -> 'Iterate over JSON Records', the 'JSON Source' field states 'No json sources in the pipeline'.

Thank you for any help you can offer,
~Daniel

------------------------------
Daniel
------------------------------

200 Views, 0 likes, 8 Comments

Pipeline Make Request - Return Make Request JSON Output into fields
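A note on the preceding question ("Get Users in Role / Process XML from HTTP API"): outside of Pipelines, the filtering step the post describes is a short XML walk. The Python sketch below shows that logic using only the standard library. The sample document is an assumption about the general shape of an API_UserRoles response (a `users` element containing `user` elements, each with a `name` and nested `roles`/`role` elements); the user names, IDs, and role names are invented for illustration.

```python
import xml.etree.ElementTree as ET

# Assumed response shape with invented users and roles; a real response
# should be inspected before relying on these element names.
SAMPLE_XML = """
<qdbapi>
  <action>API_UserRoles</action>
  <errcode>0</errcode>
  <errtext>No error</errtext>
  <users>
    <user type="user" id="111111.abcd">
      <name>Alex Example</name>
      <roles>
        <role id="12"><name>Reviewer</name></role>
      </roles>
    </user>
    <user type="user" id="222222.efgh">
      <name>Sam Sample</name>
      <roles>
        <role id="10"><name>Viewer</name></role>
        <role id="12"><name>Reviewer</name></role>
      </roles>
    </user>
    <user type="user" id="333333.ijkl">
      <name>Pat Placeholder</name>
      <roles>
        <role id="10"><name>Viewer</name></role>
      </roles>
    </user>
  </users>
</qdbapi>
"""


def users_in_role(xml_text, role_name):
    """Return the id and name of every user that holds the given role."""
    root = ET.fromstring(xml_text.strip())
    matches = []
    for user in root.iter("user"):
        role_names = [role.findtext("name") for role in user.iter("role")]
        if role_name in role_names:
            matches.append({"id": user.get("id"), "name": user.findtext("name")})
    return matches


reviewers = users_in_role(SAMPLE_XML, "Reviewer")
print(reviewers)
```

The resulting list is the kind of flat structure a looping step needs: one entry per recipient, with roles already filtered out.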

** Update: I managed to retrieve the ticket ID by using {{c.json.ticket.id}}. The only one that remains is to do the same for the users, though that is not reading the value when formatting the jinja the same way as the ticket request, even though the structure of the .json is the same.

Hi Everyone,

I have a Make Request with an endpoint pointing to our Zendesk instance:

https://instance.zendesk.com/api/v2/users/search.json?query=email:{{a.email}}

As you can see above, I am using the email address which is stored in the record field a.email - this checks if this end-user exists on Zendesk.

After running the pipeline, it returns the following in the Output (I've redacted extraneous data):

{ "users":[ {"id":13617301225116, "url":"https://instance.zendesk.com/api/v2/users/13617301225116.json", "name":"Nick Green", "email":"redacted@gmail.com"} ] (extra data redacted) }

In the above users array it has the "id":13617301225116 value, which I would like to send back to the Quickbase record to populate a text field by using an Update Record action in the pipeline.

I use jinja in an attempt to extract the specific value: {{c.content.users.id}} - however this returns a null value. When sending the entire output to the field by just using {{c.content}} I get the proper json structure, though for some reason it seems that jinja is not parsing the returned output to extract the "id" value.

Using {{c.content.users.id | tojson}} doesn't work and returns an error:

Validation error: Incorrect template "{{c.content.users.id|tojson}}". TypeError: Undefined is not JSON serializable

I also checked in with ChatGPT and it recommends using {{c.content.users.id}}

Has anyone been able to successfully do the above?

Cheers,
Nick

Solved. 199 Views, 0 likes, 2 Comments

Pipeline Iterate over JSON nested data
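A note on the preceding question ("Return Make Request JSON Output into fields"): in the posted output, `users` is an array, so the user object sits at an index rather than directly under `users`, which is a structural difference from the `ticket` object that worked. The Python sketch below illustrates that difference with the values from the post; the helper name `first_user_id` is hypothetical. By analogy, an indexed expression along the lines of `{{c.json.users[0].id}}` may be what is needed in the pipeline, though that is untested here.

```python
import json

# Trimmed version of the response quoted in the post.
response_body = (
    '{"users": [{"id": 13617301225116, "name": "Nick Green", '
    '"email": "redacted@gmail.com"}]}'
)
data = json.loads(response_body)


def first_user_id(payload):
    """Return the id of the first user in the search result, or None if empty."""
    users = payload.get("users") or []
    return users[0]["id"] if users else None


# data["users"] is a list, so data["users"]["id"] would raise a TypeError;
# the element has to be selected by position first.
print(first_user_id(data))
```

Guarding against an empty list matters for this use case, since a search for an email that does not exist would return no users at all.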

I was pulling in JSON data from Ground Control and was having some trouble pulling in some nested data while creating a record. Here is how I solved it. I hope I can save some people time.

I used the method posted here to get this started: JSON Handler details

The data initially pulled was not an issue at the top level. Nested inside my data is "customFieldValues". Sample:

"customFieldValues": [ { "name": "SomeFieldA", "value": "Abcdefg" }, { "name": "SomeFieldB", "value": "1234567" }, { "name": "SomeFieldC", "value": "CwhatIDidThere?" } ]

To pull in this value I needed to use a raw_record Jinja expression and state the location in the array:

{{b.raw_record['customFieldValues'][0]['value']}}

0 is used since this is the first location in the array. (This assumes that b. is the reference used for the other fields, such as {{b.status}}.)

This is placed in the field reference in the Create Record step for SomeFieldA.

------------------------------
James Carlos
------------------------------

199 Views, 0 likes, 3 Comments

Looping through field values in Pipeline
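A note on the preceding post ("Pipeline Iterate over JSON nested data"): indexing with `[0]` works as long as the order of `customFieldValues` never changes. The Python sketch below shows the same lookup done by field name instead of position, using the sample data from the post. It illustrates the data structure only and is not a Pipelines expression; the helper name `custom_value` is hypothetical.

```python
record = {
    "customFieldValues": [
        {"name": "SomeFieldA", "value": "Abcdefg"},
        {"name": "SomeFieldB", "value": "1234567"},
        {"name": "SomeFieldC", "value": "CwhatIDidThere?"},
    ]
}


def custom_value(raw_record, field_name, default=None):
    """Return the value of the named custom field, or `default` if it is absent."""
    for item in raw_record.get("customFieldValues", []):
        if item.get("name") == field_name:
            return item.get("value")
    return default


# Positional access, as in the post, next to the name-based lookup.
by_position = record["customFieldValues"][0]["value"]
by_name = custom_value(record, "SomeFieldA")
print(by_position, by_name)
```

Both approaches return the same value for this sample; the name-based version simply keeps working if the source system reorders or omits entries.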

I have a record that contains a text field with multiple values separated by commas (which I can also turn into a multi-select field if that makes things easier). I want to loop through the values in the field and, within each loop, perform a QB record update.

In pipelines, I understand how I can perform a loop based off a list of records, but in this case I need the loop to be based off a list of values in a field... any way to do this?

Thanks.

------------------------------
Jennason Quick Base Admin
------------------------------

199 Views, 0 likes, 21 Comments

MIME Pipeline issue
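A note on the preceding question ("Looping through field values in Pipeline"): whatever mechanism ends up driving the loop, the first step is turning the comma-separated text into a clean list of values. The Python sketch below shows that step in isolation; the field contents and the helper name `split_values` are invented for illustration, and the per-value update is represented by a plain loop rather than a real Quickbase call.

```python
def split_values(raw_text):
    """Split a comma-separated field into trimmed, non-empty values."""
    return [part.strip() for part in raw_text.split(",") if part.strip()]


# Hypothetical field contents with uneven spacing and a trailing comma.
field_text = "Alpha, Beta ,Gamma,"
values = split_values(field_text)

# Stand-in for the per-value record update the post wants to perform.
updates = [{"target": value, "action": "update"} for value in values]
print(values)
```

Trimming whitespace and dropping empty entries up front avoids issuing an update for a blank value when the field ends with a stray comma.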

Hi, pipeline gurus

We suddenly started getting the following pipeline error for an email template that we have in the application. No changes have been made to the template or form, but it's preventing the email from being sent to the user. Any suggestions on what we should be focusing on to resolve this?

Remote service reported an error: A supported MIME type could not be found that matches the content type of the response. None of the supported type(s) 'Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaType, Microsoft.OData.ODataMediaTyp...' matches the content type 'application/x-www-form-urlencoded'.

121 Views, 1 like, 9 Comments

When the Expiration Date is On or Before Today, Change the Contract Status to Expired

Hello:

I have a contract application.

Goal: When the Contract Expiration Date field is on or before today, and the Exception to the Expiration Date Dynamic Form Rule field is unchecked, I want the Contract Status field to automatically change to Expired.

Question: What is the best method to accomplish the above goal: form rule, text formula, or pipeline?

1. I tried the below form rule. However, it works intermittently. I still see expired contracts that show as active.

2. I created a formula - Text field named Contract Status Formula, but I am unsure whether my formula is correct.

If( Today() <= [Contract Expiration Date], "Expired")

3. I tried to create a pipeline. My first attempt was Search Records/Update Record. My second attempt was On New Event/Search Records/Update Record. However, I know I am missing a step.

Any step-by-step guidance would be greatly appreciated.

Thank you,
Roula

Solved. 119 Views, 1 like, 12 Comments

Time of Day field values are not in 24-hour format in Pipelines
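A note on the preceding question ("Change the Contract Status to Expired"): the stated goal is "expiration date on or before today, and exception unchecked". Written as a comparison, that is expiration date <= today, which is the opposite direction from the posted formula's `Today() <= [Contract Expiration Date]`. The Python sketch below spells out the intended rule so it can be checked against sample dates; it is not Quickbase formula syntax, and the function name, parameter names, and the "Active" fallback status are assumptions made for illustration.

```python
from datetime import date


def contract_status(expiration_date, exception_checked, today, current_status="Active"):
    """Return "Expired" when the contract has lapsed and no exception applies.

    Otherwise the existing status is returned unchanged.
    """
    if expiration_date is None:
        return current_status
    if expiration_date <= today and not exception_checked:
        return "Expired"
    return current_status


today = date(2024, 1, 15)
print(contract_status(date(2024, 1, 1), False, today))   # lapsed, no exception
print(contract_status(date(2024, 1, 15), False, today))  # expires today
print(contract_status(date(2024, 1, 1), True, today))    # lapsed, exception checked
print(contract_status(date(2024, 6, 30), False, today))  # still in the future
```

Running the four cases side by side makes it easy to confirm that "on or before today" includes the expiration day itself and that the exception checkbox overrides the date test.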

Hi all. I think I found a bug with Pipelines where a Time of Day field isn't output in 24-hour format even when you set the field settings to 24-hour. Can anyone think of a possible workaround? Most APIs use 24-hour format, so having Pipelines only output in North American format makes it impossible to pass along the correct time format.

Steps to reproduce:

1. Create a Time of Day field.
2. In field settings > Value display, enable 24-hour clock.
3. Create a pipeline that sends a value in this field.
4. Pipelines outputs the field in North American time format (for example, 5:00 PM), instead of 24-hour format (ex: 17:00).

I tried using Jinja to reformat to 24-hour, but Pipelines doesn't support the jinja filters needed to re-format to 24-hour time. Here's the Jinja expression I tried:

{{ time-field|replace(" AM", "").|replace(" PM", "")|stringptime("%I:%M")|strftime("%H:%M") }}

Solved. 100 Views, 0 likes, 4 Comments

Pipelines Advanced Query
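A note on the preceding question ("Time of Day field values are not in 24-hour format in Pipelines"): the conversion itself needs the AM/PM marker to be parsed rather than stripped, because removing it discards which half of the day the time falls in. The Python sketch below shows the conversion with the standard library, for cases where the value can be reformatted outside Pipelines. It assumes input in the "5:00 PM" style quoted in the post and an English-language locale for the AM/PM marker; the helper name `to_24_hour` is hypothetical.

```python
from datetime import datetime


def to_24_hour(time_text):
    """Convert a 12-hour time such as "5:00 PM" to 24-hour "HH:MM" text."""
    parsed = datetime.strptime(time_text.strip(), "%I:%M %p")
    return parsed.strftime("%H:%M")


for sample in ["5:00 PM", "12:00 AM", "12:30 PM", "9:05 AM"]:
    print(sample, "->", to_24_hour(sample))
```

The midnight and noon samples are included because they are the cases a manual "add 12 if PM" rule most often gets wrong.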

The goal is to build an Advanced Query in a Pipeline that will compare two User Fields in the record. I am looking for the Record Owner to match the User in FID 9.

The query that will not work is:

{'4'.EX.'9'}

What the query says is "Record Owner equals 9", not "Record Owner equals the value of the User in FID 9".

Searching for the syntax to get this right has been fruitless. Anyone know a solution?

------------------------------
Don Larson Paasporter Westlake OH
------------------------------

100 Views, 1 like, 10 Comments